Immersive Display PRO – Dynamic Head Tracking


This post covers an advanced topic: multi-projector edge blending with real-time head tracking for simulation environments.

In a traditional multi-projection system for simulation environments, the position of the “actors” in the projection setup is fixed. In a flight, car, or boat simulator, for example, the participant sits or stands in one position relative to the projection screen and stays there.

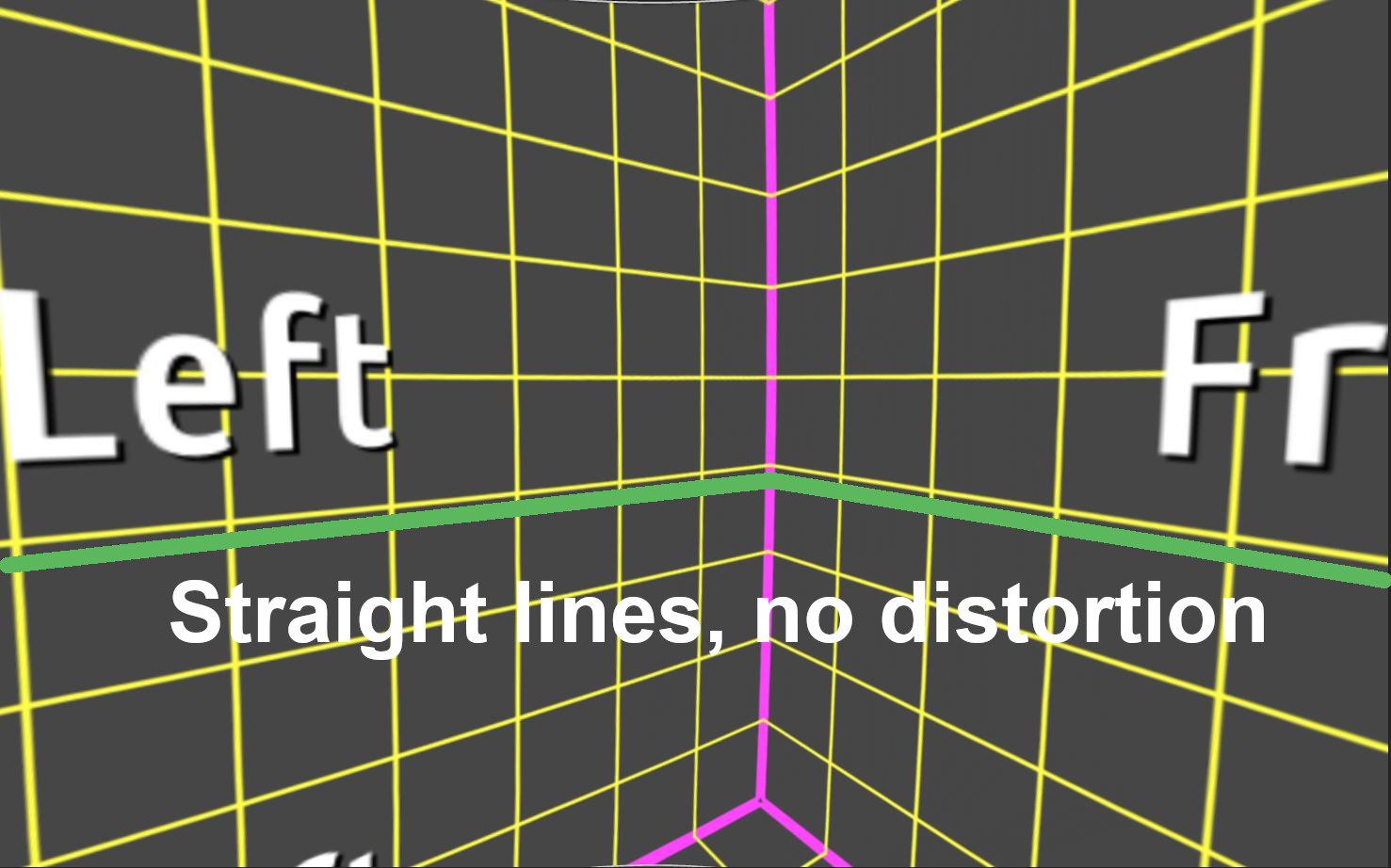

Fly Elise-ng Immersive Calibration PRO calculates the perspective view for each projector/channel so that the auto-aligned, edge-blended images on the projection screen are geometrically correct and free of distortion when viewed from that fixed position.

However, in projection and simulation setups where the actor can move, things get more complicated.

First, the simulation software (the image generator) needs to be informed about the position change of the real actor. Second, the geometric correction and edge-blending software has to be notified about the same position change. Because the 3D position can change in real time, both the image generator and Immersive Display PRO (warping and blending) have to receive each new position at the same time, so that the edge-blended images on the projection screen stay geometrically correct at all times.

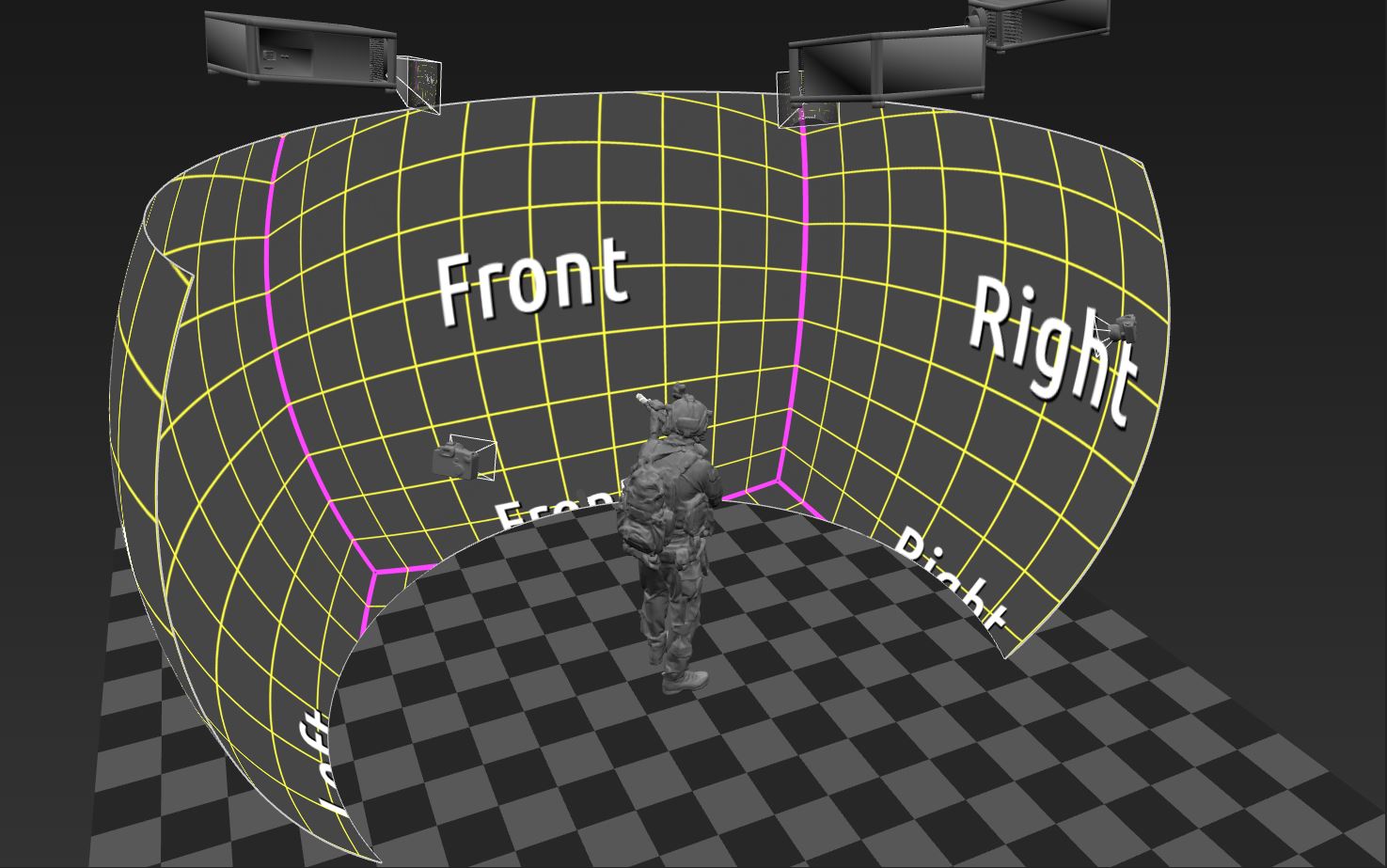

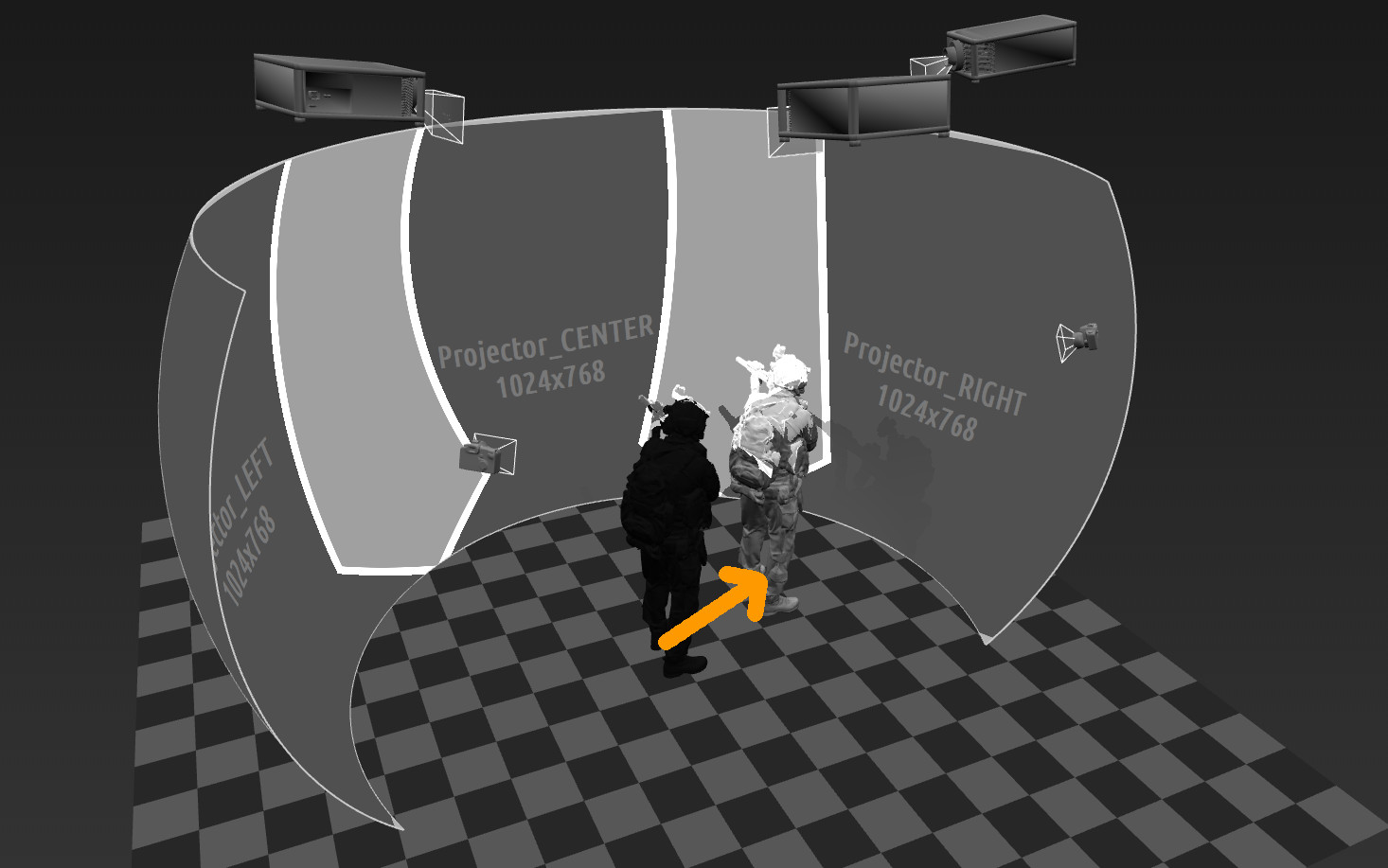

The following setup illustrates this. It uses a partial dome projection screen with three overlapping, edge-blended projectors.

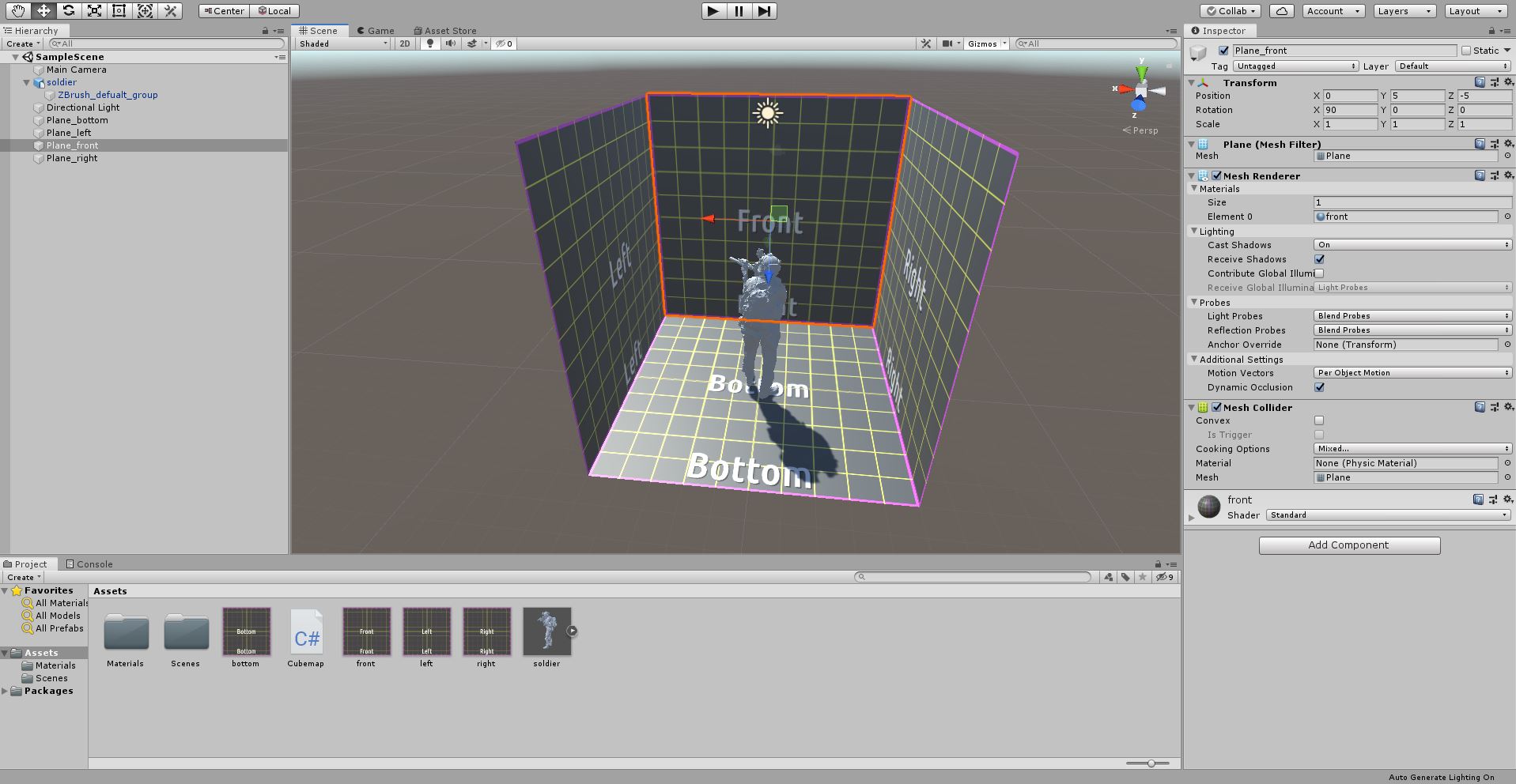

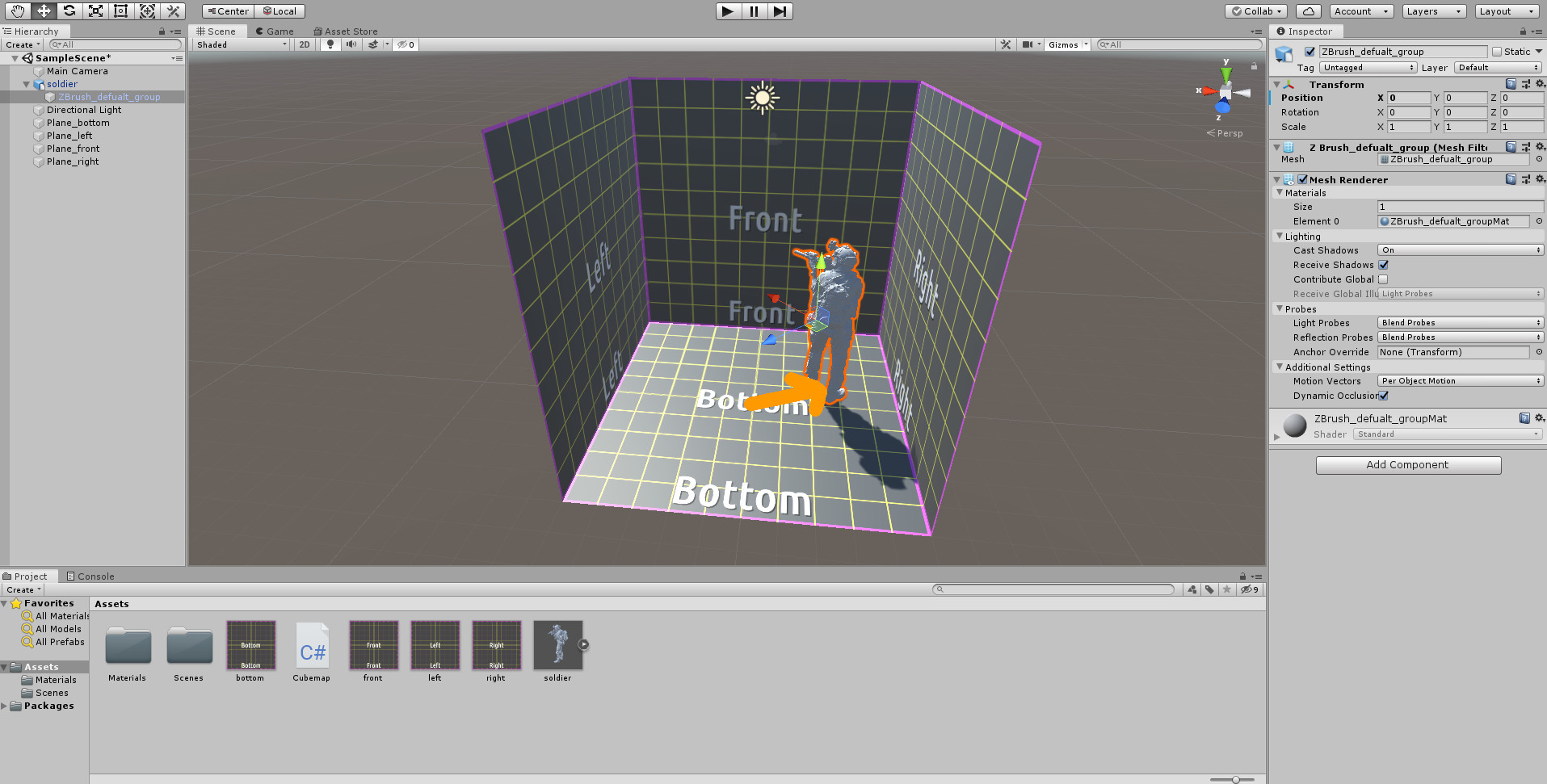

A military simulation and training application is created in Unity, and auto-alignment is performed with Immersive Calibration PRO. Immersive Calibration PRO generates the Unity camera views, one per projector, from the reference eye-point position specified in Immersive Calibration PRO.

A soldier will be positioned in the real projection setup at the reference eye-point position. The projectors will project perfectly aligned and edge-blended images on the projection screen.

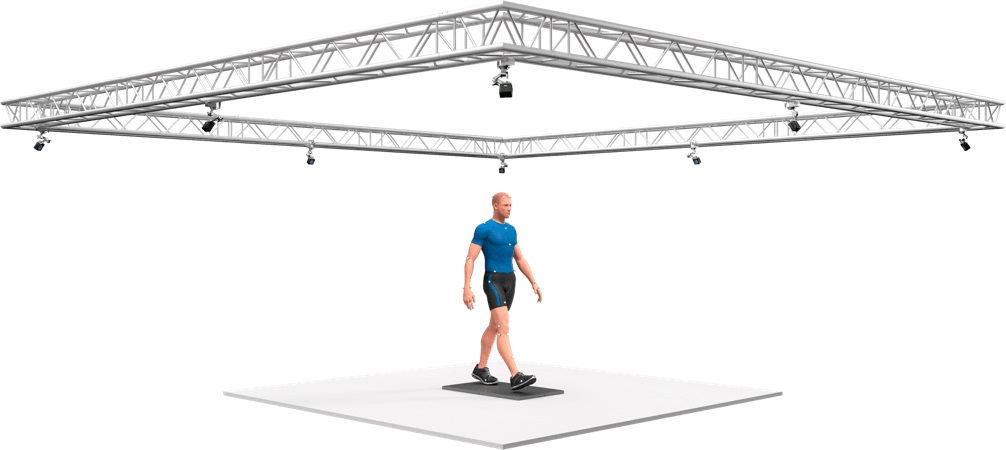

However, in this setup, the soldier can move freely in the area of the projection screen. To track the soldier's movement, a motion tracking system is used. This system can be based on a set of OptiTrack cameras (https://optitrack.com/) or an HTC Vive tracker system (https://www.vive.com/eu/vive-tracker/).

In both cases, the motion tracking system will track the 3D position of the soldier (in real-time) in the area of the projection screen.

Whenever the soldier moves, the motion tracking system detects the new 3D position. This 3D position is used to move the virtual camera in the image generator and simulation software (Unity), and it is also sent to the edge-blending software (Immersive Display PRO).

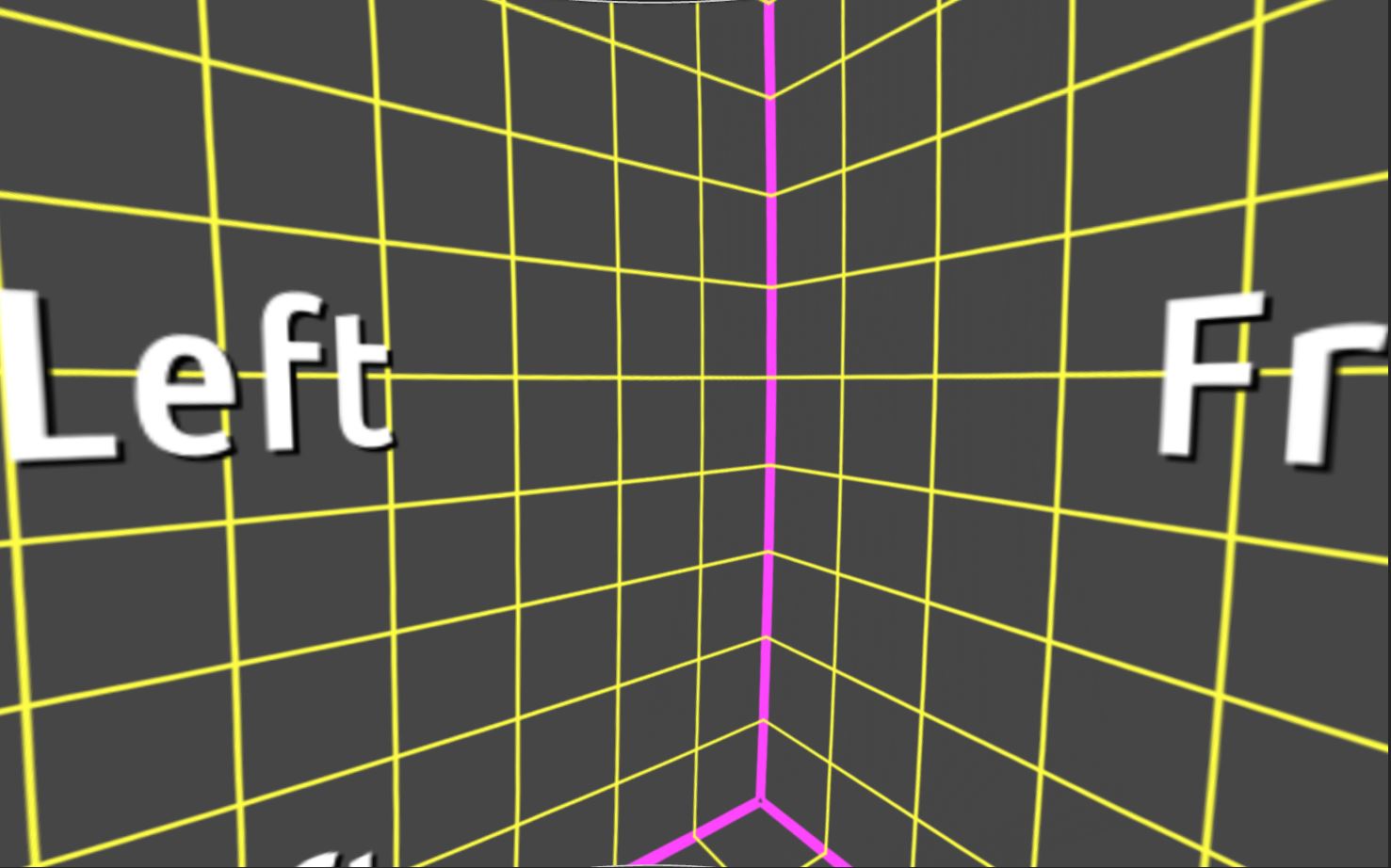
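The fan-out described above, one tracked position delivered to both consumers, can be sketched as follows. This is only an illustration: the packet layout, the `EyePoint` type, and the `encode`, `decode`, and `broadcast` helpers are hypothetical and are not the Immersive Display PRO head-tracking interface, whose actual protocol is not described in this post.

```python
import socket
import struct
from dataclasses import dataclass

# Hypothetical wire format (NOT the Immersive Display PRO protocol):
# little-endian uint32 sequence number followed by x, y, z as float64 (metres).
_PACKET = struct.Struct("<Iddd")


@dataclass(frozen=True)
class EyePoint:
    """One tracked head position, tagged with a sequence number."""
    seq: int
    x: float
    y: float
    z: float


def encode(sample: EyePoint) -> bytes:
    """Serialise a sample into the hypothetical 28-byte packet."""
    return _PACKET.pack(sample.seq, sample.x, sample.y, sample.z)


def decode(payload: bytes) -> EyePoint:
    """Inverse of encode()."""
    return EyePoint(*_PACKET.unpack(payload))


def broadcast(sample: EyePoint, endpoints, sock: socket.socket) -> bytes:
    """Send the identical payload to every endpoint.

    Encoding once and reusing the bytes means the image generator and the
    warping software receive exactly the same position for this sample.
    """
    payload = encode(sample)
    for address in endpoints:
        sock.sendto(payload, address)
    return payload
```

In a real deployment `sock` would be a UDP socket created with `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)`, and `endpoints` would list the (assumed) addresses of the Unity listener and the warping software. The sequence number lets each receiver discard stale samples, so both sides can agree on which position a given frame was rendered and warped for.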

The second step is essential for keeping the projected image geometrically correct. If the position change is not also applied in Immersive Display PRO, the image will appear distorted whenever the soldier moves, even though the images generated by Unity are geometrically correct.

Let’s demonstrate how this works.

After the initial edge-blending and perspective view calculation in Immersive Calibration PRO, Unity is set up with the calculated camera views and Immersive Display PRO is configured with the exported .procalb files. Observed by the soldier standing at the designed eye-point position, the images on the projection screen are perfectly aligned.

Now, the soldier moves in the projection setup to explore the simulated training environment.

This movement is detected and tracked by the motion tracking system, and the motion tracking software reports the soldier's new 3D position. That position is sent to Unity, which moves the Unity camera to it.

This will make sure that Unity generates the perspective views that correspond to the new 3D position of the soldier and the soldier sees the images on the projection screen according to his new 3D position.

However, seen from the soldier's new eye-point position, the images on the projection screen look distorted, even though they were generated for the correct 3D position.

This is because the warping and edge-blending were calculated for the soldier's initial position. When the soldier moves, the warping of the images projected on the curved screen has to be recalculated for the new eye-point position.
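A small worked example gives a feel for the size of the effect. It uses a deliberately simplified model that is an assumption of this sketch, not the actual Immersive Display PRO algorithm: the warp ties each screen point to the viewing direction from the reference eye-point, so after a move the content at that screen point is off by the angle between the old and the new viewing direction. The function names and the 3 m dome radius are illustrative.

```python
import math


def direction(eye, point):
    """Unit vector pointing from the eye position to a point on the screen."""
    v = [p - e for p, e in zip(point, eye)]
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def angular_mismatch_deg(ref_eye, new_eye, screen_point):
    """Angle in degrees between the viewing directions to the same screen
    point, seen from the reference eye-point and from the new eye-point.

    Simplified model (an assumption of this sketch): if the warp is not
    updated, content at this screen point is displaced by roughly this angle.
    """
    d0 = direction(ref_eye, screen_point)
    d1 = direction(new_eye, screen_point)
    dot = sum(a * b for a, b in zip(d0, d1))
    dot = max(-1.0, min(1.0, dot))  # guard acos against rounding error
    return math.degrees(math.acos(dot))


# Illustrative numbers: a screen point 3 m straight ahead of the reference
# eye-point, and a soldier who steps 0.5 m to the side.
mismatch = angular_mismatch_deg(
    ref_eye=(0.0, 0.0, 0.0),
    new_eye=(0.5, 0.0, 0.0),
    screen_point=(0.0, 0.0, 3.0),
)
print(f"{mismatch:.2f} degrees")
```

With these numbers the mismatch is about 9.5 degrees, far too large to go unnoticed, which is why the warp has to follow the eye-point and not only the Unity camera.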

Meet the Immersive Display PRO head-tracking interface…

This interface can be used to send the 3D position of the soldier, as detected by the motion tracking system, to Unity and to Immersive Display PRO simultaneously and in real time. For each detected 3D position, Immersive Display PRO corrects the warping for the new eye-point in real time, so that the warped images on the screen are geometrically correct for every eye-point position of the soldier.

The soldier can move freely in the scene, and the combination of the head tracking system, Unity, and Immersive Display PRO ensures in real time that the soldier always sees undistorted, geometrically correct images on the projection screen.

The real-time head tracking and warping correction in Immersive Display PRO is available for the DirectX 11, DirectX 12, and OpenGL graphics frameworks.

Contact us for more information on how to access and use the Head Tracking Interface.
